Can Gemma 4 Beat Gemini 3.1 Pro at Coding?

Is a $20/month Google AI Pro account worth it versus running Gemma 4 31B on OpenRouter pay-as-you-go? This Ship-Bench run was designed to answer that question across a realistic coding workflow rather than a single coding prompt.

Hypothesis: Gemini's larger model size would give it clear advantages over Gemma's 31B parameters, especially when working through problems.

Key Insights

Gemini finished with an 86.6 average across the five roles and passed 4 of 5 gates, while Gemma finished at 72.4 and passed only 1 of 5 (UX Designer).

Gemma actually led the raw Architect and UX scores, but it still failed the Architect gate because it used generic “Latest” placeholders instead of pinning exact framework versions.

The biggest separation showed up in execution and verification: Gemini scored 93.3 in Developer versus Gemma's 58, and 72 versus 37 in Reviewer.

Gemini is currently an unusually strong value on AI Pro, but the more durable market-rate comparison is roughly $5.05 for Gemini versus $0.85 for Gemma on OpenRouter-equivalent pricing.

Setup

Both runs used the same machine, the same runtime family, the same benchmark task, and the same Ship-Bench version (v1). The main difference was the harness and provider setup, which matters because operator experience and tool behavior can shape outcomes even when the benchmark target stays constant.

Environment

| Item | Value |
|---|---|
| Machine | Windows 11 |
| Runtime | Node v24 |
| Ship-Bench repo | ship-bench v1 |
| Benchmark task | Simplified knowledge base app |

Run configuration

| Item | Gemini run | Gemma run |
|---|---|---|
| Harness | Gemini CLI 0.38.2 | GitHub Copilot CLI 1.0.34 |
| Model | Gemini 3.1 Pro | Gemma 4 31B |
| Backend | Google AI Pro account | OpenRouter |
| Run repo | Gemini branch | Gemma branch |

Judge configuration

| Item | Value |
|---|---|
| Judge harness | Claude Code |
| Judge model | Opus 4.7 Medium |
| Evaluation mode | LLM judge plus human review |

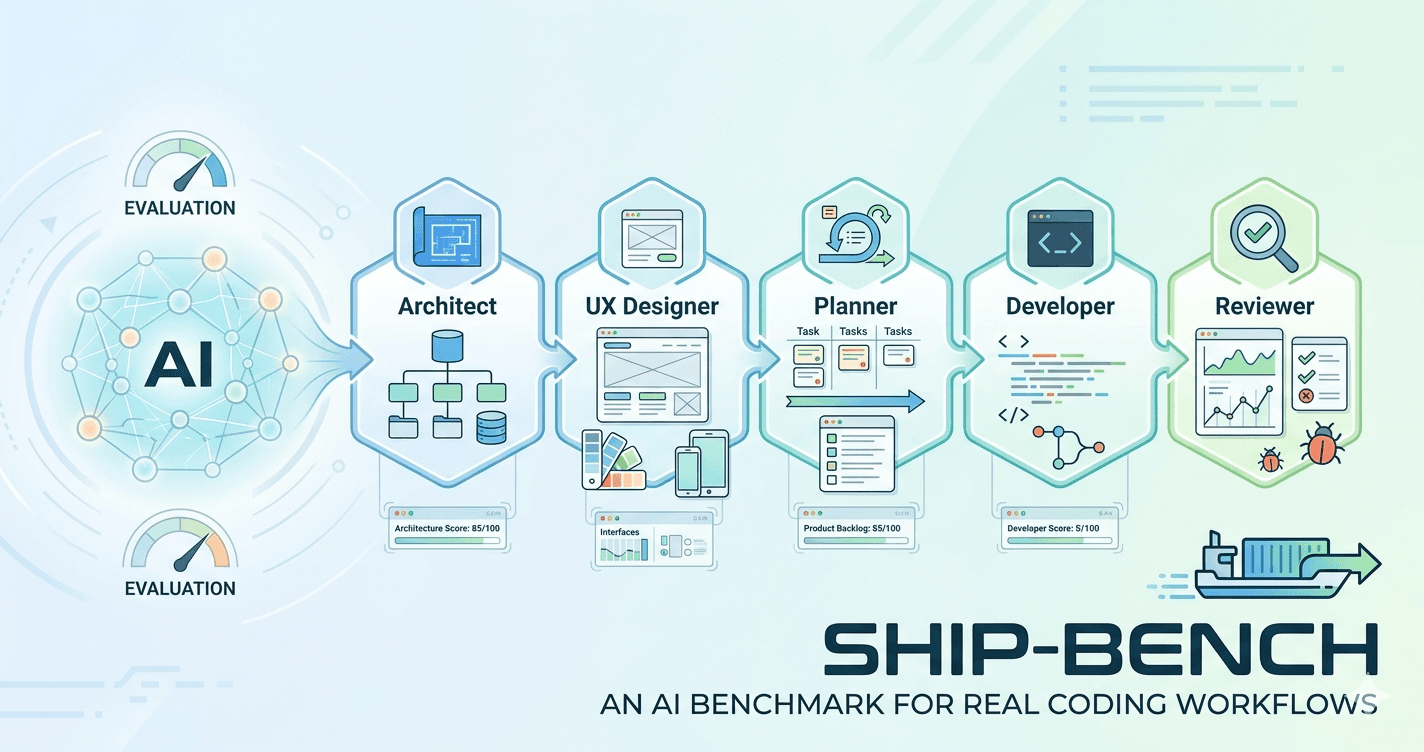

Ship-Bench Context

Ship-Bench evaluates models across five SDLC roles: Architect, UX Designer, Planner, Developer, and Reviewer. Each role produces artifacts that feed the next stage, making the benchmark useful for measuring both isolated output quality and handoff quality across a realistic workflow.

This run used the standard simplified knowledge base app task. That task is large enough to expose differences in architecture, planning, implementation, and QA without becoming too open-ended to compare cleanly across runs.

Overall Results

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Architect | 87.2 | 92.2 (FAIL gate) |
| UX Designer | 89.5 | 94.6 |
| Planner | 91.1 | 80.0 (FAIL gate) |
| Developer | 93.3 | 58.0 (FAIL gate) |
| Reviewer | 72.0 (FAIL gate) | 37.0 (FAIL gate) |
| Average score | 86.6 | 72.4 |
| Gates passed | 4/5 | 1/5 |

Gemini was more dependable across the full workflow. Gemma looked competitive early, but the later-stage failures were severe enough to erase that advantage in practical terms.
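As a sanity check, the averages and gate counts can be recomputed from the per-role results. The sketch below is a minimal Python example that hard-codes the scores and pass/fail outcomes reported in the per-role tables of this article; the `RESULTS` dictionary and `summarize` helper are illustrative and not part of Ship-Bench.

```python
# Per-role (score, passed_gates) pairs copied from the per-role tables in this article.
RESULTS = {
    "Gemini 3.1 Pro": {
        "Architect": (87.2, True),
        "UX Designer": (89.5, True),
        "Planner": (91.1, True),
        "Developer": (93.3, True),
        "Reviewer": (72.0, False),
    },
    "Gemma 4 31B": {
        "Architect": (92.2, False),
        "UX Designer": (94.6, True),
        "Planner": (80.0, False),
        "Developer": (58.0, False),
        "Reviewer": (37.0, False),
    },
}


def summarize(roles):
    """Return (mean score rounded to one decimal, roles passed, total roles)."""
    scores = [score for score, _ in roles.values()]
    passed = sum(1 for _, ok in roles.values() if ok)
    return round(sum(scores) / len(scores), 1), passed, len(roles)


if __name__ == "__main__":
    for model, roles in RESULTS.items():
        avg, passed, total = summarize(roles)
        print(f"{model}: average {avg}, passed {passed}/{total}")
```

With these inputs it reports an 86.6 average with 4/5 roles passed for Gemini and a 72.4 average with 1/5 passed for Gemma.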

Architect

The architect stage tests whether the model can turn the product brief into a concrete technical plan with clear decisions and minimal unresolved ambiguity.

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Score | 87.2 | 92.2 |
| Pass | Yes | No |
| Output | Gemini architecture | Gemma architecture |
| Eval | Gemini eval | Gemma eval |

LLM judge summary: Gemma scored higher on design quality and ergonomics, but failed the mandatory Frameworks gate because it used generic “Latest” placeholders instead of exact version pins. Gemini passed with a slightly lower raw score, mostly because the LLM judge docked points for minor nitpicks.

Human notes: Both chose SQLite plus Prisma for a good local-first developer experience, but neither specified what a deployed database path should look like, so both would have needed follow-up prompting there. Testing strategies were broadly similar and backend and data choices were nearly identical, but the front-end architecture showed a real difference: Gemma defaulted to a standard Next.js plus Tailwind stack, while Gemini simplified to vanilla CSS in a way that felt more thought-through for the actual backlog. Gemma's outdated framework assumptions are also a meaningful practical issue, especially since version drift is already a known complaint about LLM-generated code.
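The Frameworks gate failure comes down to whether each dependency has an exact version. The rule Ship-Bench actually applies is not described here, so the following is only a hypothetical Python check: it treats a version as pinned when it is a bare `MAJOR.MINOR.PATCH` string (optionally with a pre-release suffix) and flags placeholders such as “Latest” or range specifiers such as `^1.2.3`. The `unpinned` helper is invented for illustration, and the version numbers in the example are made up.

```python
import re

# Hypothetical rule: an exact pin is MAJOR.MINOR.PATCH with an optional
# pre-release suffix, and no range operators (^, ~, >=) or placeholders.
EXACT_PIN = re.compile(r"\d+\.\d+\.\d+(?:-[0-9A-Za-z.]+)?")


def unpinned(stack):
    """Return the names of frameworks whose version string is not an exact pin."""
    return [
        name
        for name, version in stack.items()
        if not EXACT_PIN.fullmatch(version.strip())
    ]


if __name__ == "__main__":
    # Illustrative stack; the version numbers are placeholders, not recommendations.
    stack = {"Next.js": "Latest", "Prisma": "^1.2.3", "Tailwind CSS": "1.2.3"}
    print(unpinned(stack))  # ['Next.js', 'Prisma']
```

Using `fullmatch` rather than `match` keeps strings like `1.2.3 or newer` from slipping through as pinned.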

UX Designer

The UX stage evaluates whether the design direction is specific enough to guide implementation, including flows, states, layout decisions, and interaction details.

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Score | 89.5 | 94.6 |
| Pass | Yes | Yes |
| Output | Gemini UX spec | Gemma UX spec |
| Eval | Gemini eval | Gemma eval |

LLM judge summary: Both passed. Gemma scored slightly higher because it was a bit more complete on states and accessibility detail, while Gemini was still fully usable and implementable.

Human notes: Gemma did a bit better describing screen routes by user flow, but Gemini's version was still perfectly functional. Gemini also put more thought into the interactions themselves, even if both specs largely covered the same interaction set.

Planner

The planner stage tests whether the model can convert prior artifacts into an executable delivery sequence with sensible task sizing and dependency order.

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Score | 91.1 | 80.0 |
| Pass | Yes | No |
| Output | Gemini backlog | Gemma backlog |
| Eval | Gemini eval | Gemma eval |

LLM judge summary: Gemini produced better-scoped vertical slices and passed the planner gates. Gemma failed because its task structure relied too heavily on horizontal slicing, deferred testing until the end, and left the iterations unbalanced.

Human notes: This is where Gemini's stronger reasoning started to matter more. Both understood scope and dependencies well, but Gemma's sequence of Foundation → Browsing → Editing → Testing left both unit and end-to-end testing to the final iteration, which created imbalanced iterations and caused rework in iteration 4. Gemini's sequence of Base/Foundation → Browsing/Viewing → Editing → Searching felt more realistic and better balanced.

Developer

The developer stage measures whether the model can implement the assigned backlog into a working MVP while staying aligned to the earlier artifacts.

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Score | 93.3 | 58.0 |
| Pass | Yes | No |
| Output | Gemini source | Gemma source |
| Eval | Gemini eval | Gemma eval |

LLM judge summary: Gemini delivered a working MVP with verified browse, search, and edit flows. Gemma's implementation failed on a broken Prisma import that caused 500 errors and prevented the write path from functioning correctly.

Human notes: Both models needed some operator intervention around interactive commands like create-react-app and Playwright setup. The practical difference is that Gemini mostly sailed through implementation after that, while Gemma could not get the newer Prisma version working, downgraded it, never got Playwright green, and left a critical bug on the edit article page that required manual fixing.

Reviewer

The reviewer stage closes the loop by checking whether the built MVP actually satisfies the brief, specs, and implementation plan.

| Metric | Gemini 3.1 Pro | Gemma 4 31B |
|---|---|---|
| Score | 72.0 | 37.0 |
| Pass | No | No |
| Output | Gemini QA report | Gemma QA report |
| Eval | Gemini eval | Gemma eval |

LLM judge summary: Both reviewer runs failed gates, but in very different ways. Gemini's failure was relatively minor: it caught real defects but did not supply screenshots, attached evidence, or other verification artifacts. Gemma's reviewer missed the app-crashing Prisma import entirely, marked broken flows as PASS without browser verification, and recommended shipping a non-functional app.

Human notes: Gemini's stronger reasoning showed up again here: it found one major issue and several minor ones, but none blocked primary functionality. Gemma never got the Playwright tests running, did not work around that limitation, and missed the critical showstopping bugs altogether.
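Gemini's Reviewer failure was about missing evidence rather than missed defects. A minimal, hypothetical Python check for that kind of gap might look like the following; the report structure and key names are assumptions based on the items listed in the gate failure (screenshots, a coverage report, and logs), not Ship-Bench's real schema.

```python
# Hypothetical evidence keys, based on the items named in Gemini's Reviewer gate failure.
REQUIRED_EVIDENCE = ("screenshots", "coverage_report", "logs")


def missing_evidence(report):
    """Return the required evidence items that are absent or empty in `report`."""
    return [item for item in REQUIRED_EVIDENCE if not report.get(item)]


if __name__ == "__main__":
    # A report that lists real defects but attaches no supporting artifacts.
    report = {
        "defects": ["one major issue", "several minor issues"],
        "screenshots": [],
    }
    print(missing_evidence(report))  # ['screenshots', 'coverage_report', 'logs']
```

Treating an empty list the same as a missing key reflects the idea that a report section with nothing attached is not evidence.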

Gate Failures

| Model | Role | Gate failure |
|---|---|---|
| Gemini 3.1 Pro | Reviewer | Evidence gate: no screenshots, coverage report, or attached logs, despite otherwise sound defect detection. |
| Gemma 4 31B | Architect | Frameworks gate: no exact versions, “Latest” placeholders, and outdated assumptions on version currency. |
| Gemma 4 31B | Planner | 70% good chunks gate: horizontal slicing and late testing caused poor iteration quality. |
| Gemma 4 31B | Developer | MVP flows and critical bugs gates: a broken Prisma import caused 500 errors and blocked key flows. |
| Gemma 4 31B | Reviewer | Flows, Defects, and Evidence gates: the reviewer missed critical failures and did not verify runtime behavior. |

Token and Cost Analysis

The quality difference matters, but cost is the practical question behind this comparison.

| Metric | Gemini AI Pro (effective) | Gemini OpenRouter equivalent | Gemma OpenRouter |
|---|---|---|---|
| Total tokens | 2.35M | 2.35M | 6.43M |
| Estimated cost | ~$0.13 | $5.05 | $0.85 |
| Cost per average point | $0.0015 | $0.058 | $0.012 |

Gemini is currently a great value on AI Pro, at roughly $0.13 effective for this run based on the observed request budget, but that pricing should not be assumed to last, since providers can reduce quotas and raise prices. The more durable comparison is the retail-style one: about $5.05 for Gemini versus $0.85 for Gemma, which makes Gemma far cheaper but also much weaker once the workflow reaches implementation and QA.
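The cost-per-point column is simply estimated cost divided by average score. The Python sketch below reproduces it from the table and also derives an implied blended price per million tokens. That blended figure is not reported above; it is a derived number that lumps input and output tokens together, even though they are usually priced differently, so treat it as a rough comparison only.

```python
# Token totals, estimated costs, and average scores from the cost table above.
RUNS = {
    "Gemini AI Pro (effective)": {"tokens": 2.35e6, "cost_usd": 0.13, "avg_score": 86.6},
    "Gemini OpenRouter equivalent": {"tokens": 2.35e6, "cost_usd": 5.05, "avg_score": 86.6},
    "Gemma OpenRouter": {"tokens": 6.43e6, "cost_usd": 0.85, "avg_score": 72.4},
}


def cost_per_point(run):
    """Estimated dollars spent per point of average score."""
    return run["cost_usd"] / run["avg_score"]


def blended_rate_per_million(run):
    """Implied average dollars per 1M tokens (input and output lumped together)."""
    return run["cost_usd"] / (run["tokens"] / 1e6)


if __name__ == "__main__":
    for name, run in RUNS.items():
        print(
            f"{name}: ${cost_per_point(run):.4f} per point, "
            f"${blended_rate_per_million(run):.2f} per 1M tokens"
        )
```

On these numbers Gemma used roughly 2.7 times as many tokens as Gemini but cost about one sixth as much, which works out to an implied rate of roughly $0.13 versus $2.15 per million tokens.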

App Comparison

The benchmark scores matter most, but screenshots still help reveal polish and coherence that score tables do not fully capture.

Screenshots

| View | Gemini app | Gemma app |
|---|---|---|
| Home page | article_list.png | articles.png |
| Search results | search.png | search.png |
| Article detail | article.png | article.png |
| Article editor | article_edit.png | article_edit.png |

Subjective UX review

Both models produced broadly similar flows, which is expected given the task and specs. The main visual difference is that Gemini went very lean and content-forward, while Gemma inherited baseline Tailwind styling that felt slightly less polished in practice.

Both apps would have benefited from wireframes earlier in the process. There were also some obvious missed touches on both sides, such as stronger search calls to action, although Gemma at least added a “Clear search” option that Gemini lacked.

Interpretation

This run suggests that Gemini's deeper reasoning matters most once the workflow stops being about drafting and starts being about sequencing, implementation, recovery, and verification. Gemma stayed competitive in the earlier specification-heavy stages, but the later breakdowns show that a cheaper model can still become expensive if it burns cycles on rework or misses critical issues.

That does not mean Gemma has no place. With tighter task definitions and more explicit setup constraints, it could still make sense as a lower-cost option for spec-heavy work or coding loops where the operator is willing to be more hands-on.

Verdict: Gemini 3.1 Pro

Gemini showed that deeper reasoning pays off in coding workflows, at least in this benchmark. It produced the more reliable end-to-end result and delivered a working MVP across the SDLC handoffs that matter most.

Gemma was much cheaper on a market-rate basis and looked competitive in the early roles, but it broke down where the benchmark became most operationally demanding. With more upfront work to make task definitions crisper, Gemma may still be a sensible way to save money on coding loops, but this run did not show it as the better full-workflow option.